Followup to: The Basic Double Crux Pattern

Intro

This is a continuation of my previous post, in which I outlined what I currently see as the core pattern of double crux finding. This post will mostly be an example of that pattern in action.

While this example will be more realistic than the intentionally silly example in the last post, it will still be simplified, for the sake of brevity and clarity. There’s a tradeoff between realism and clarity of demonstration. The following conversation is mostly fictional (though inspired by Double Cruxes that I have actually observed). It is intended to be realistic enough that this could have been a real conversation, but it is also specifically demonstrating the basic Double Crux pattern (as opposed to other moves or techniques that are often relevant).

Review

As a review, the key conversational moves of Double Crux are…

- Finding a single crux:

  - Checking for understanding of P1’s point.

  - Checking if that point is a crux for P1.

- Finding a double crux:

  - Checking P2’s belief about P1’s crux.

  - Checking if P2’s crux is also a crux for P1.
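For readers who find code easier to hold in their heads than prose, here is a minimal sketch of the distinction these moves are tracking. It is only an illustration: the `Stance` class, its field names, and the two functions are hypothetical names I made up for this sketch, not part of any Double Crux material. The sketch models each person's relationship to a proposition as two separate facts, whether they believe it and whether flipping that belief would change their mind on the main question.

```python
from dataclasses import dataclass


@dataclass
class Stance:
    """One participant's relationship to a proposition (illustrative model only)."""
    believes: bool      # does this person currently think the proposition is true?
    would_update: bool  # would flipping this belief change their mind on the main question?


def is_crux(stance: Stance) -> bool:
    # "Is it a crux?" is a separate question from "Is it true?"
    return stance.would_update


def is_double_crux(p1: Stance, p2: Stance) -> bool:
    # A double crux is a crux for both people, who disagree about its truth.
    return is_crux(p1) and is_crux(p2) and p1.believes != p2.believes


# Hypothetical proposition A: both care about it, and they disagree.
print(is_double_crux(Stance(believes=True, would_update=True),
                     Stance(believes=False, would_update=True)))   # True

# Hypothetical proposition B: a crux for one person only (a single crux).
print(is_double_crux(Stance(believes=True, would_update=True),
                     Stance(believes=False, would_update=False)))  # False
```

Keeping `believes` and `would_update` as separate fields is the point of the sketch: a facilitator asks about the second one before anyone argues about the first.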

As an exercise, I encourage you to go through the example below and track what is currently happening in the context of this frame: Which parts of the conversation correspond to which conversational move?

Note that, in general, participants will be intent on saying all kinds of things that aren’t cruxes, and part of the facilitator’s role is to keep the conversation on track, while also engaging with their concerns.

Example: Carol and Daniel on gun control legislation

[Important note: This example is entirely fictional. It doesn’t represent the views of anyone, including me. The arguments that these two characters make are constructions of arguments that I have heard from CFAR participants, things that I heard in podcasts years ago, and things I made up. I have no idea if these are in fact the most important considerations about gun control, or if the facts that these characters reference are actually true, only that they are plausible positions that a person could hold.]

Two people, Carol and Daniel, are having a discussion about politics. In particular, they’re discussing new gun laws that would heavily restrict citizens’ ability to buy and sell firearms. Carol is in favor of the laws, but Daniel is opposed.

Facilitator: Daniel, do you want to tell us why you think this law is a bad idea?

Daniel: Look, every time there’s a mass shooting, everyone gets up in arms about it, and politicians scramble over each other to show how tough they are, and how bad an event the shooting was, and then they pass a bunch of laws that don’t actually help, and add a bunch of red tape for people who want to buy firearms for legitimate uses.

Facilitator: Ok. There were a lot of things in there, but one part of it is that you said that these laws don’t work?

Daniel: That’s right.

Facilitator: You mean that they don’t reduce the incidence of mass shootings?

Daniel: Yeah, but mass shootings are almost a rounding error.

Facilitator: [suggesting an operationalization] So what do you mean when you say they don’t help? That they don’t reduce the murder rate?

Daniel: Yeah. That’s right.

Facilitator: Ok. If you found out that this law in particular did reduce the US murder rate, would you be in favor of it?

Daniel: Well, it would have to have a significant impact. If the effect is so small as to be basically unnoticeable, then it probably isn’t worth the constraint on millions of people across the US.

Facilitator: Ok. But if you knew that this law would “meaningfully” reduce the murder rate, would you change your mind about this law? (Where meaningfully means something like “more than 5%”?)

Daniel: Yeah, that sounds right.

Facilitator: Great. Ok. So it sounds like whether this law would impact the murder rate is a crux for you.

Carol, do you think that this law would reduce the murder rate?

Carol: Yeah.

Facilitator: By more than 5%?

Carol: I’m not sure by how much specifically, but something like that.

Facilitator: Ok. If you found out that this law would actually have no effect (or close to no effect) on the murder rate, would you change your mind?

Carol: I think it would reduce the murder rate.

Facilitator: Cool. You currently think that this law would reduce the murder rate. I want to talk about why you think that in a moment. But first, I want to check if it’s a crux. Suppose you found really strong evidence that this law actually wouldn’t reduce the murder rate at all (I realize that you currently think that it will), would you still support this law?

Carol: Yeah. I guess there wouldn’t be much of a point to it in that case.

Facilitator: Cool. So it sounds like this is a Double Crux. You, Daniel, would change your mind about this law if you found out that it would substantially reduce the murder rate, and you, Carol, would change your mind if you found out it wouldn’t reduce the murder rate. High-five!

The participants high-five, and write down their cruxes.

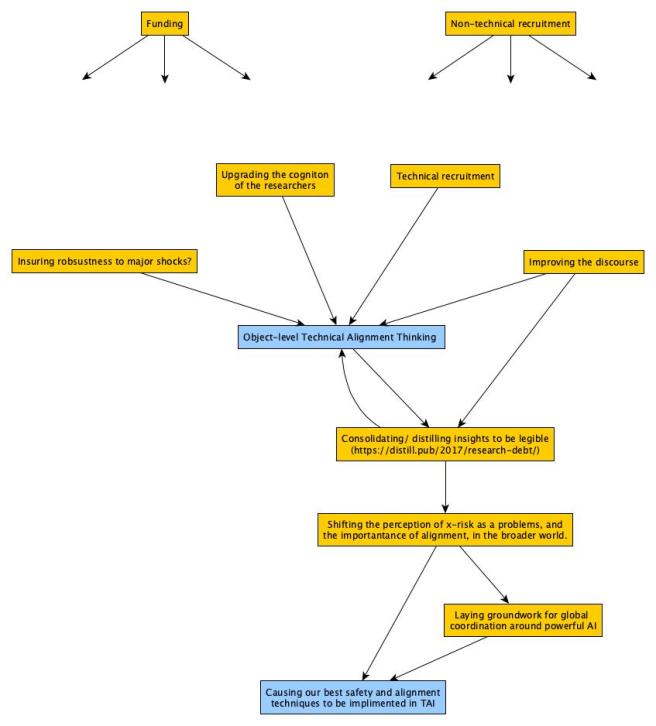


Facilitator: Alright. So it sounds like we should talk about this double crux. Why does each of you think that this would, or wouldn’t, reduce the murder rate?

Carol: Well, I’m aware of other countries that implemented similar reforms, and they did in fact reduce incidents of violent crime in those countries.

Facilitator: Ok. So the reason why you think that this law would reduce the murder rate is that it worked in other countries?

Carol: That’s right.

Facilitator: Ok. Suppose you found out that actually laws like this did not help in those other countries? Would that change your mind about how likely this reform is to work in the US?

Carol: I’m pretty sure that it did work. In Sweden, for instance.

Facilitator: Ok. Suppose you found out that the evidence in those countries, like Sweden, didn’t hold up, or it turns out you misread the studies or something.

Carol: If all those studies turned out to be bunk…Yeah. I guess that would cause me to change my mind.

Facilitator: Cool. So it sounds like that’s a crux for you.

Daniel, presumably, you think that laws like this don’t lower the murder rate in other countries. Is that right?

Daniel: Well, I don’t know about those studies in particular. But I’m generally skeptical here, because this topic is politicized. I think you can’t rely on most of the papers in this area.

Facilitator: Ok. Is that a crux for you?

Daniel: I don’t think so. There are a lot of gun law interventions that are effective in other countries, but don’t work in the US, because there are just so many more guns in the US than in other countries in the world. Like, because there are so many guns already in circulation, making it harder to buy a gun today makes almost no difference to how easy it is to get your hands on a gun. In other countries where there are fewer weapons lying around, things like gun buybacks, or restrictions on gun purchases, have more of an effect, because the pool of existing guns is smaller, and so those interventions have a much larger proportional impact.

Like, you have to account for the black market. There’s a big black market in weapons in the US, much bigger than in most European countries, I think.

Facilitator: [Summarizing] Ok. It sounds like you’re saying that you don’t think making it harder to buy guns has an impact on the murder rate because, if you want to kill someone in the US, you can get your hands on a gun one way or another.

Daniel: Yeah.

Facilitator: Ok. Is that a crux for you? Suppose that you found out that, actually, reforms like this one do make it much harder for would-be criminals to get a gun. Would that change your mind about whether this reform would shift the murder rate?

Daniel: Let’s see…I might want to think about it more, but yeah, I think if I knew that it actually made it harder for those people to get guns, that would change my mind, but it would have to be a lot harder. Like, it would have to be hard enough that those people end up just not having guns, not like they would have to wait an extra 2 weeks.

Facilitator: Ok. Cool. If laws like this made it so that fewer criminals had guns, you would expect those laws to improve the murder rate?

Daniel: Yeah.

Facilitator: Carol, do you expect that this new gun law would cause fewer criminals to own guns?

Carol: Yeah. But it’s not just the guns. The other thing is the ammunition. This law would not just make it harder to buy guns, but would make it harder to buy bullets. It doesn’t matter how criminals got their guns (they might have inherited them from their grandmother, for all I know), if they can’t buy bullets for those guns.

Facilitator: OH-k. That’s pretty interesting. You’re saying that the way that you limit gun violence is by restricting the flow of ammunition.

Carol: Exactly.

Daniel: Huh. Ok. I hadn’t thought about that. That does seem pretty relevant.

Facilitator (to Daniel): Is that a crux for you? If this law makes it harder for criminals to get bullets, would you expect it to impact the murder rate?

Daniel: Yeah. I hadn’t thought about that. Yeah, if would-be murderers have trouble getting ammunition, then I would expect that to have an impact on the murder rate. But I don’t know how they get bullets. It might be mostly via black market channels that aren’t much affected by this kind of regulation. Also, it may be that it’s similar to guns, in that there are already so many bullets in circulation, that it-

Facilitator (to Daniel): Cool. Let’s hold off on that question until we check if it is also a crux for Carol.

Facilitator (to Carol): Is that a crux? Suppose that you found out that criminals are able to get their hands on ammunition via “unofficial”, illegal methods. Would that change your mind about how likely this policy is to reduce the murder rate in the US?

Carol: Let me think.

. . .

I’m still kind of suspicious. I would want to see some hard data from states or countries that have a similar gun culture to the US, and also have these sorts of restrictions-

Daniel [Interrupting]: Yeah, I would obviously want that too.

Carol: …but in the absence of evidence like that, then it makes sense that if criminals get guns and ammo via illegal channels, then a reform that makes it harder to buy weapons wouldn’t have any impact on the murder rate.

Facilitator: Ok. Awesome. So it sounds like we have a Double Crux: “Would this regulation limit the availability of ammunition for would-be killers?” Separately, you, Carol, have a single crux about “Would this make it harder for criminals to buy guns?” We could talk about that, but Daniel doesn’t think it’s as relevant.

Carol: No. I’m excited to just talk about the ammo for now.

Facilitator: Cool. That also makes sense to me.

And maybe underneath “Would this regulation limit the availability of ammunition to would-be killers?”, there’s another question of “How do criminals mostly get their ammunition?” If they get their ammo from sources that are relevantly affected by these sorts of restrictions, then we expect it to restrict the flow of ammo to criminals. But if they have a stockpile, or get it from the black market (which gets it from other countries, or something), then we wouldn’t expect the availability of ammo for criminals to be impacted much.

Does that sound right?

[Nods]

Facilitator: Ok then. Cool. I think you guys should high five.

Carol and Daniel high five, and then write the new cruxes on their piece of paper.

[image]

Facilitator: Also, it sounds like there was maybe another Double Crux there? That is, if there was evidence from a country or state that tried a policy like this one, and also had a similar number of guns in circulation?

Does that sound right?

Daniel: Yeah.

Carol: Yep.

Facilitator: Ok. That just seems good to note, even if it isn’t actionable right now. If either of you encounter evidence of that sort, it seems like it would be relevant to your beliefs.

But it seems like the most useful thing to do right now is to see if we can figure out how criminals typically get ammunition.

Daniel and Carol researched this question, found a bunch of conflicting evidence, but settled on a shared epistemic state about how most criminals get their ammo. One or both of them updated, and they lived with a more detailed model of this small part of the world ever after.

Discussion

Hopefully this example gives a flavor of what a Double Crux conversation is (often) like.

Again, I want to point out that this example uses the person of the facilitator for convenience. While I do usually recommend getting a third person to be a facilitator, it is also totally possible for one or both of the conversational participants to execute these moves and steer the conversation.

This conversation is specifically intended to demonstrate the basic Double Crux pattern (although the normal order was disrupted a bit towards the end of the conversation), but nevertheless, we see some examples of other principles or techniques of productive disagreement resolution.

Distillation: As is common, Daniel said a lot of things in his opening statement, not all of which are relevant, or cruxes. One of the things that the facilitator needs to do is draw out the key point or points from all of those words, and then check if those are crucial. Much of the work of Double Crux is drawing out the key points each person is making, so you can check if they are cruxes.

Operationalizing: This particular example was pretty low on operationalization, but nevertheless, early on, the conversation settled on the operationalization of “reducing the murder rate”.

Persistent crux checking: Every time either Carol or Daniel made a point, the facilitator would ask them if it was a crux.

Signposting: The facilitator frequently states what just happened (“It sounds like that’s a crux for you.”). This is often surprisingly helpful to do in a conversation. The facilitator

Distinguishing between whether a proposition is a crux, and what the truth value of a crux is: One thing that you’ll notice is that several times, the facilitator asks Carol if some proposition “A” is a crux for her, and she responds by saying, in effect, “I think A is true”. This is a fairly common thing that happens, even for quite smart people. It is important to make mental space between the questions “is X a crux for Y?”, and “is X true?”. It is often very tempting to jump in and refute a claim, before checking if it is a crux. But it is important to keep those questions separate.

False starts: There were several places where the facilitator checked for a Double Crux, and “missed”. This is natural and normal.

This conversation was weird in that most of the considerations named were cruxes for someone. People are often inclined to share considerations that, upon reflection, they agree don’t matter at all.

Conversational avenues avoided: This is maybe the key value add of Double Crux. For every false start, there was a long, and ultimately irrelevant conversation that could have been had.

It might have been tempting for Daniel to argue about whether gun control regulation actually worked in other countries. (It is just sooo juicy to tell people exactly how they are wrong, when they’re wrong.) But that would have been a pointless exercise (for Daniel’s belief improvement, at least), because Daniel thinks that the data from other countries is irrelevant. At every point, they stay on a track that both parties consider promising for updating.

(Of course, sometimes you do have information that is relevant to your partner’s crux, but not to your own, and it is good and proper to share that info with them.)

Inside view / outside view separation: Towards the end, we saw Carol distinguishing between outside view reasoning based on empirical data, and inside view reasoning based on thinking through probable consequences. She clearly separated these two, for herself. I think this is sometimes a useful move, so that one can feel psychologically permitted to do blue-sky reasoning, without forgetting that you would defer to the empirical data over and above your theorizing.

Conclusion

I also again want to clarify my claims:

- I am not claiming that this pattern solves all disagreements.

- I am not claiming that this is the only thing a person should do in conversation.

- I am not claiming that one should always stick religiously to this pattern, even when Double Cruxing. (Though it is good to try sticking religiously to this pattern as a training exercise. This is one of the exercises that I have people do at Double Crux trainings.)

I am saying…

- This is my current best attempt to delineate how finding double cruxes usually works, and

- I have found double cruxes to be extremely useful for productively resolving disagreements.

Hope this helps. More to come, soon probably.

[As always, I’m generally willing to facilitate conversations and disagreements (Double Crux or not) for rationalists and EAs. Email me at eli [at] rationality [dot] org if that’s something you’re interested in.]